Two prior posts worked through the statistics of the SB 6346 sign-in data. In the first I established the methodology and the finding. After applying a birthday-corrected collision test to separate organic participation from anomalous windows, roughly 90,000 legitimate CON participants remain against roughly 9,100 legitimate PRO participants. In the second I addressed the legislature’s claim that duplicate names make the dataset unreliable. The finding runs the other way. A genuine sample drawn from a real community produces name collisions at a predictable rate. People share surnames, people hit submit twice, households have two people with the same name. The PRO overnight batch produced zero collisions across 934 draws, where the statistical minimum expected is around 30. The anomaly is suspicious precisely because it has too few duplicates, not too many. Real participation is messy. This was not.

This post is not about those results. It is about what legislators said about them at a February 24 media availability, and whether their positions are statistically defensible.

They are not.

The Math Problem With “Not Helping Us Make Decisions”

“It’s not like we are making decisions not to pass a bill because of a sign in… they’re not really helping us make decisions in terms of amendments to bills or whether to pass it out of committee or not. We rely on people who actually come and testify in person.”

— Senator Manka Dhingra, February 24 media availability

That is a statistical claim. It asserts that the sign-in data has no decision-relevant information. For that to be true, one of two things must hold. Either the signal is too noisy to be meaningful, or legislators have better information that makes it redundant.

Neither holds.

The 10:1 ratio across 90,000 legitimate responses is not ambiguous. The margin of error at that sample size is roughly a third of a percentage point. The ratio does not wobble under any standard statistical treatment. Even applying the most aggressive self-selection correction anyone has proposed, assuming CON participants are twice as motivated to engage as PRO participants, the adjusted ratio is still 5:1. The signal does not disappear. Calling it noise is not a statistical judgment. It is a refusal to do the math.
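The correction described above is plain division. A minimal Python sketch, assuming self-selection can be modeled as a single multiplier on CON turnout (a simplification made here for illustration, with hypothetical function and variable names) and using the rounded counts from the prior posts:

```python
def adjusted_ratio(con, pro, con_motivation=1.0):
    """CON:PRO ratio after discounting CON by an assumed motivation multiplier."""
    return (con / con_motivation) / pro

# Rounded counts quoted from the prior posts.
CON, PRO = 90_000, 9_100

print(f"observed: {adjusted_ratio(CON, PRO):.1f}:1")
print(f"CON assumed 2x as motivated: {adjusted_ratio(CON, PRO, 2.0):.1f}:1")
```

With the rounded counts the figures come out at about 9.9:1 and 4.9:1, which this post rounds to 10:1 and 5:1.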

As for better information, what would that be? Testimony at a two-hour hearing. Phone calls. Letters. The intuitions of members who have held their seats for multiple cycles. None of those are more statistically rigorous than 90,000 data points. Most are orders of magnitude less rigorous. If a senator’s read of the room outweighs a dataset this large at a ratio this clear, that is not superior methodology. That is substituting anecdote for evidence.

Dhingra’s preferred alternative, people who show up in person, has its own problem. The photo below is from the February 6 Senate hearing. The room is full of people in matching purple shirts and teal sashes. That is coordinated turnout, organized in advance, by people with the resources and flexibility to get to Olympia on a weekday. It is the physical equivalent of a sign-in campaign, except it requires taking a day off work and driving to the state capitol.

That standard also systematically excludes the people most affected by legislation. A small business owner in Spokane worried about a new tax on their income cannot easily testify on a Wednesday. A nurse working a shift cannot. A retired teacher in Yakima cannot. The sign-in system exists precisely because geographic and economic barriers make in-person participation inaccessible to most Washingtonians. Dismissing sign-ins in favor of in-person testimony is not a quality upgrade. It replaces one self-selected sample with a smaller, more organizationally filtered one.

What Statistically Relevant Engagement Actually Looks Like

A standard poll commissioned to gauge public opinion on a major policy question uses around 1,000 respondents. That produces a margin of error of roughly 3.1% at 95% confidence. Those numbers drive legislation, inform campaign strategy, and get cited on the floor. Nobody demands methodology disclosure before a senator cites a Crosscut poll. That is simply the accepted evidentiary standard for constituent sentiment.

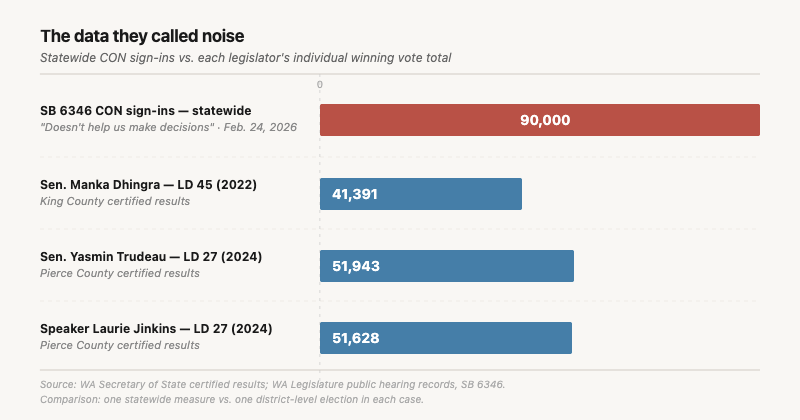

The sign-in dataset, after deduplication, contains roughly 90,000 legitimate CON responses. Strict margin-of-error calculations require a random sample rather than opt-in data, but the arithmetic at this scale is hard to dismiss: a random sample of this size would carry a margin of error of approximately 0.33%. This dataset is ninety times larger than what legislators already treat as a reliable signal, with precision roughly ten times tighter.
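Both margins come from the textbook formula for a simple random sample, z·sqrt(p(1−p)/n), evaluated at the worst case p = 0.5. A minimal sketch, assuming a 95% confidence level (z ≈ 1.96) and a truly random sample, which opt-in sign-ins are not; the function name is mine, for illustration only:

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Half-width of a confidence interval for a proportion (simple random sample assumed)."""
    return z * math.sqrt(p * (1 - p) / n)

poll_moe = margin_of_error(1_000)     # typical commissioned poll
signin_moe = margin_of_error(90_000)  # hypothetical random sample of sign-in size

print(f"n = 1,000:  +/- {poll_moe:.2%}")
print(f"n = 90,000: +/- {signin_moe:.2%}")
print(f"tightening factor: {poll_moe / signin_moe:.1f}x")
```

The tightening factor is the square root of 90, about 9.5, because the margin shrinks with the square root of the sample size rather than with the sample size itself.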

Washington has approximately 5.5 million registered voters. Ninety thousand responses represent roughly 1.6% of that population engaging with a single bill in committee. In political science research on constituent contact, engagement rates on individual pieces of legislation are typically measured in fractions of a percent. At 1.6%, this dataset sits well above that baseline, not a rounding error beyond it. The prior record for sign-ins on any Washington bill was reportedly around 45,000, itself considered extraordinary. This dataset doubled it, and the legislative website crashed under the volume because nothing in the system’s design anticipated engagement at this scale.

The infrastructure of participation failed because the signal exceeded its design limits. That is not a data quality problem. That is evidence of something real happening in the electorate.

To put 90,000 in electoral terms: Washington has 49 legislative districts. Distributed statewide, that averages roughly 1,800 CON sign-ins per district. The 2024 state Senate race in the 10th district was decided by 153 votes. The House race in the 17th district was decided by fewer than 200. Several competitive seats turned on margins smaller than the number of people in those districts who showed up to oppose this bill. Legislators are not dismissing a fringe signal. They are dismissing a constituency that is, in several of their districts, larger than their margin of victory.
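The per-district figure is a simple average. A short sketch, assuming sign-ins are spread evenly across districts (an idealization, since no district-level breakdown is given here) and using only the figures quoted in this post:

```python
CON_SIGNINS = 90_000
DISTRICTS = 49
SENATE_LD10_MARGIN = 153  # 10th district Senate margin as quoted in this post

# Even-spread assumption: real sign-ins will cluster unevenly by district.
avg_per_district = CON_SIGNINS / DISTRICTS

print(f"average CON sign-ins per district: {avg_per_district:,.0f}")
print(f"multiple of the LD10 margin: {avg_per_district / SENATE_LD10_MARGIN:.0f}x")
```

Under that even-spread assumption the average is about twelve times the 10th district margin, so the comparison survives substantial unevenness in where sign-ins actually came from.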

Consider how the same legislators would respond to a poll of 1,000 Washingtonians showing 10:1 opposition to a bill. That finding would be treated as dispositive. It would be cited in floor speeches, appear in press releases, and be described as a clear signal of constituent sentiment. This dataset shows the same ratio at ninety times the sample size, with a margin of error roughly ten times tighter, with an audit trail, with a reproducible methodology, and after removing anomalous windows on both sides.

The legislators who called it noise do not apply that standard to anything else they use.

You Don’t Need to Read the Bill

“I don’t think everyone who’s signing in in support or opposition is actually reading the bill. So I think you got to take it for what it’s worth.”

— Senator Yasmin Trudeau, February 24 media availability

For a technical bill where the title might mislead, that would be a legitimate point. SB 6346 is not that kind of bill. Washington has not had an income tax in nearly a century. Voters have rejected it ten times. For most constituents signing in CON, reading the bill is beside the point. They already know where they stand. The question SB 6346 raises for them is not what the rate structure looks like. It is whether Washington should have an income tax at all, and on that question they have a consistent ninety-year answer. Beyond that settled position, the architects of this legislation documented their strategy in writing years before the bill was introduced.

In April 2018, Senator Jamie Pedersen sent an email to a former Democratic legislator explaining the real value of passing a capital gains tax. The major use of revenue, he wrote, was secondary. The more important benefit was on the legal side. Passing a capital gains tax would give the Supreme Court the opportunity to revisit its decisions that income is property, and would “make it possible to enact a progressive income tax with a simple majority vote.” Those emails were obtained through public records and published by the Washington Policy Center, which also documented the three-step sequence Pedersen described. Pass the capital gains tax to break the legal seal. Pass a millionaires tax to build the administrative infrastructure. Then lower the threshold to capture the middle class.

The capital gains excise passed in 2021. Pedersen also promised the revenue would reduce property and sales taxes. The state collected $1.8 billion in capital gains revenue from 2022 to 2024. Not a dollar went to reducing property or sales taxes. New spending absorbed everything. The Supreme Court upheld the excise in Quinn v. State in 2023, doing precisely what Pedersen predicted. A surcharge was added in 2025. SB 6346 arrived in 2026 as the simple majority vote Pedersen described eight years earlier.

A constituent who signs in CON without reading SB 6346 but who knows this history is not pattern-matching by instinct. They are responding accurately to a documented legislative strategy, now in its final stage, by an architect who wrote down the plan. Trudeau’s concern assumes the sign-in reflects ignorance. The record complicates that assumption.

The federal income tax was introduced in 1913 with a top rate of 7% on incomes above $500,000. It has not stayed that limited. A constituent who has watched Washington’s capital gains excise follow the same arc, introduced with tax relief promises that were never kept and expanded within four years, is not being paranoid. They are reading the pattern correctly.

A constituent signing in CON on this bill is not evaluating the mechanics of a 9.9% rate on income above one million dollars. They are evaluating a mechanism with a documented history and a stated long-term purpose. That is not noise. That is the signal working as designed.

The Participation Double Standard

“As a general rule, I always warn my members, you shouldn’t really pay attention to that kind of dialogue… maybe focus less on numbers and more on quality of engagement.”

— Speaker Laurie Jinkins, February 24 media availability

“Quality of engagement” implies that organized participation is lower quality than spontaneous participation. Applied consistently, that standard would disqualify most of what the same legislators celebrate as democratic infrastructure.

Get out the vote campaigns are organized, at scale, through forwarded links, text banking, social media mobilization, and door knocking. They systematically encourage people to act on issues they may not have independently researched. Nobody argues that a voter who was reminded to register by a campaign text is less legitimate than one who showed up spontaneously. Nobody demands that turnout in heavily canvassed precincts be discounted because the participation was encouraged rather than organic.

The asymmetry is hard to explain on principled grounds. Get out the vote efforts are explicitly designed to shape electoral outcomes, which directly determines who holds legislative power. Organized sign-in campaigns are designed to inform legislators of constituent sentiment on a specific bill, which Jinkins then warns her members not to pay attention to anyway. If one is legitimate democratic infrastructure and the other warrants skepticism, that distinction requires an explanation nobody has offered.

The Self-Selection Argument Does Not Save Them

The legitimate version of the dismissal is astroturfing risk. Organized campaigns can mobilize sign-ins that do not reflect organic sentiment. Two problems follow.

First, the statistical work already addresses it. The anomalies I flagged in those prior posts run against the PRO side, not CON. The CON signal carries the messy collision fingerprint consistent with real people. The organized manipulation concern, applied rigorously and symmetrically, strengthens the CON signal rather than undermining it.

Second, self-selection disqualifies nothing legislators already use. Every constituent signal they rely on is self-selected. Calls. Letters. Town hall attendance. Donations. None represent a random sample of the electorate. The sign-in system is being held to an evidentiary standard that almost no feedback mechanism in democratic practice has met, and that standard is applied to nothing else.

What makes the sign-in data different from those signals is not that it is less reliable. It is that it is more systematic. It produces a record. It is auditable. It generated enough volume to run statistical tests on. The methodology applied here would hold up in a peer-reviewed context. The “I talked to my constituents” alternative would not.

For the underlying sentiment to be actually close to even, CON participants would need to be systematically ten times more motivated to engage through this specific channel than PRO participants. That is not a bias correction. That is a complete erasure of the observed signal. No one has offered a mechanism that produces that result.
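If self-selection is modeled as a single multiplier on CON turnout (an assumption made here for illustration), the required multiplier is the observed ratio divided by the true one. A sketch with a hypothetical helper name and the rounded 10:1 ratio used throughout this post:

```python
def required_multiplier(observed_ratio, true_ratio=1.0):
    """How many times more motivated CON must be for true_ratio to appear as observed_ratio."""
    return observed_ratio / true_ratio

OBSERVED = 10.0  # rounded CON:PRO ratio from this post

for true_ratio in (1.0, 2.0, 5.0):
    m = required_multiplier(OBSERVED, true_ratio)
    print(f"true sentiment {true_ratio:.0f}:1 needs CON to be {m:.0f}x as motivated")
```

Even a true 2:1 split against the bill would require CON participants to be five times as motivated as PRO participants under this model.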

The legislators dismissing this data are not applying a rigorous evidentiary standard. They are applying a selective one.

The Broader Pattern

In “Disdain or Design?” I wrote about what happens to constituent input in Washington when institutional actors have decided on an outcome. The user interface of democracy still renders. The buttons are there. What that piece examined is whether the backend those buttons connect to has been rewired.

The sign-in dismissal is that pattern made unusually explicit. When lawmakers assert that sign-in anomalies damage the ‘democratic process,’ the irony is staggering. The legislature already removed the actual democratic process from this bill by attaching an emergency clause, deliberately blocking the public’s ability to challenge it via referendum. They pre-emptively silenced the electoral signal; now legislative leaders are simply stating on camera that the only constituent participation left is not helping them make decisions.

Washington voters have rejected income taxation ten times through the constitutional amendment process. The legislature is effectively circumventing the initiative process that most recently codified that preference. Dismissing the largest constituent response in state legislative history as something members should not pay attention to is not a data science position.

It is a tell about whose input actually shapes the outcome.

The Question That Deserves an Answer

Every signal legislators use to read constituent sentiment is self-selected. Calls. Letters. Town halls. Protests. Donations. Sign-ins are just self-selection at scale, with a paper trail rigorous enough to audit.

It is a perfectly reasonable position to argue that 90,000 highly motivated people clicking a web form do not flawlessly represent the entire state of Washington. But if the legislature genuinely wanted a higher-fidelity democratic signal, it would not have attached an emergency clause to explicitly bypass the voters. And it would not be ignoring nearly a century of bipartisan ballot results in which Washingtonians have rejected this exact policy ten separate times.

Legislators are free to make that choice, but voters deserve transparency about it, not a smokescreen of statistical skepticism that the data itself dismantles. When the numbers speak this clearly, ignoring them isn’t methodology; it’s a deliberate unplugging of democracy’s earpiece.