When people think about SSL performance they are normally concerned with the performance impact on the server, specifically they talk about the computational and memory costs of negotiating the SSL session and maintaining the encrypted link. Today though it’s rare for a web server to be CPU or memory bound so this really shouldn’t be a concern, with that said you should still be concerned with SSL performance.

Did you know that at Google SSL accounts for less than 1% of the CPU load, less than 10KB of memory per connection and less than 2% of network overhead?

Why? Because studies have shown that the slower your site is the less people want to use it. I know it’s a little strange that they needed to do studies to figure that out but the upside is we now have some hard figures we can use to put this problem in perspective. One such study was done by Amazon in 2008, in this study they found that every 100ms of latency cost them 1% in sales.

That should be enough to get anyone to pay attention so let’s see what we can do to better understand what can slow SSL down.

Before we go much further on this topic we have to start with what happens when a user visits a page, the process looks something like this:

- Lookup the web servers IP address with DNS

- Create a TCP socket to the web server

- Initiate the SSL session

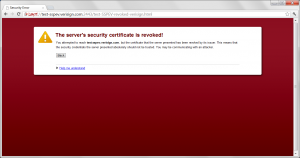

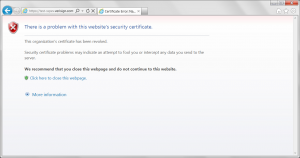

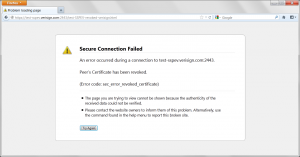

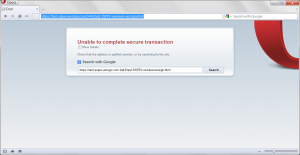

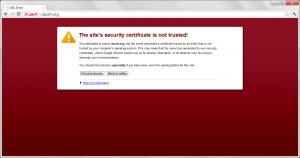

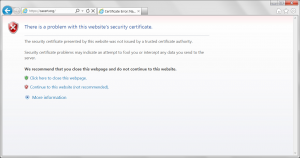

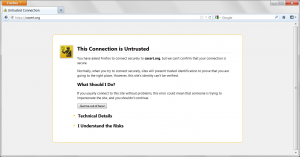

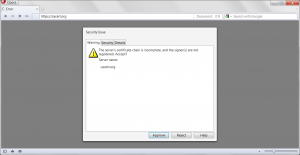

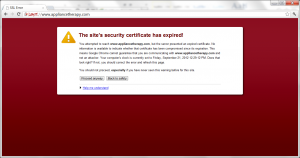

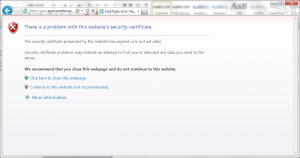

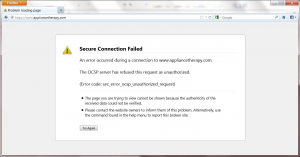

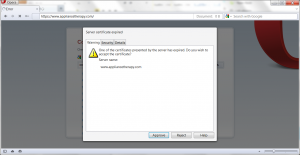

- Validate the certificates provided by the server

- Establish the SSL session

- Send the request content

What’s important to understand is that to a great extent the steps described above tasks happen serially, one right after another – so if they are not optimized they result in a delay to first render.

To make things worse this set of tasks can happen literally dozens if not a hundred times for a given web page, just imagine that processes being repeated for every resource (images, JavaScript, etc.) listed in the initial document.

Web developers have made an art out of optimizing content so that it can be served quickly but often forget about impact of the above, there are lots of things that can be done to reduce the time users wait to get to your content and I want to spend a few minutes discussing them here.

First (and often forgotten) is that you are dependent on the infrastructure of your CA partner, as such you can make your DNS as fast as possible but your still dependent on theirs, you can minify your web content but the browser still needs to validate the certificate you use with the CA you get your certificate from.

These taxes can be quite significant and add up to 1000ms or more.

Second a mis(or lazily)-configured web server is going to result in a slower user experience, there are lots of options that can be configured in TLS that will have a material impact on TLS performance. These can range from the simple certificate related to more advanced SSL options and configuration tweaks.

Finally simple networking concepts and configuration can have a big impact on your SSL performance, from the basic like using a CDN to get the SSL session to terminate as close as possible to the user of your site to the more advanced like tuning TLS record sizes to be more optimum.

Over the next week or so I will be writing posts on each of these topics but in the meantime here are some good resources available to you to learn about some of these problem areas: