One of the most fundamental design principles for a secure system is least privilege. For CAs, one scenario where it can be applied is the subordination of another CA.

Applying this concept to CA subordination is referred to as qualified subordination. It was first formalized in the IETF standards for X.509 in 1999, in RFC 2459, through the introduction of Basic Constraints (see section 4.2.1.10), Name Constraints (see section 4.2.1.11) and Policy Constraints (see section 4.2.1.12).

Unfortunately, broad product support did not begin to emerge until RFC 3280 was released in 2002.

The development and deployment of these concepts were primarily driven by the US Federal Government’s deployment of PKI as a foundational technology for its security infrastructure. One of the many benefits of the government adopting these concepts was that NIST published a robust test suite to validate conformance with its interpretation of RFC 3280, which included extensive coverage of qualified subordination.

When these concepts are used together, a Root CA is able to delegate the right to issue certificates to another CA while restricting that CA from creating other CAs or issuing certificates for names it is not authoritative for.
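As a rough illustration of how these restrictions combine, the sketch below models a chain check in plain Python. It is a simplified teaching model, not a real path validator: the `CaCert` class and the `dns_within` and `chain_allows` helpers are hypothetical names invented for this example, only permitted DNS subtrees are modeled, and excluded subtrees, self-issued certificates and signature checks are left out.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CaCert:
    """Hypothetical, simplified stand-in for a CA certificate."""
    name: str
    is_ca: bool = True                 # Basic Constraints cA flag
    path_len: Optional[int] = None     # Basic Constraints pathLenConstraint
    # Name Constraints permitted DNS subtrees; empty means unconstrained.
    permitted_dns: List[str] = field(default_factory=list)


def dns_within(name: str, subtree: str) -> bool:
    """True if `name` equals `subtree` or only adds labels to its left."""
    name = name.lower().rstrip(".")
    subtree = subtree.lower().rstrip(".")
    return name == subtree or name.endswith("." + subtree)


def chain_allows(cas: List[CaCert], leaf_dns: str) -> bool:
    """`cas` is ordered root first; the leaf certificate is not in the list."""
    for i, ca in enumerate(cas):
        if not ca.is_ca:
            return False               # a non-CA certificate cannot issue
        cas_below = len(cas) - i - 1   # CA certificates that follow this one
        if ca.path_len is not None and cas_below > ca.path_len:
            return False               # too many subordinate CAs
        if ca.permitted_dns and not any(
            dns_within(leaf_dns, s) for s in ca.permitted_dns
        ):
            return False               # name outside the delegated namespace
    return True


root = CaCert("Root")
sub = CaCert("Constrained Sub CA", path_len=0, permitted_dns=["example.com"])
print(chain_allows([root, sub], "www.example.com"))
print(chain_allows([root, sub], "www.example.org"))
```

With a path length of zero and a permitted subtree of `example.com`, the subordinate in this model can vouch for names under `example.com` but cannot stand up a further CA beneath itself or issue for unrelated domains.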

The Federal Bridge made extensive use of these concepts; it was able to do so through a mandate to use software that met the published guidelines. Adoption on the Internet took much longer, given the historically slow upgrade rates for browsers. Thankfully that has changed, and there is now sufficient browser support to deploy these restrictions.

In addition, Microsoft introduced another mechanism to restrict the scope for which a CA is trusted. It did this by treating the Extended Key Usage extension (see section 4.2.1.13) as a means to delegate only certain issuance capabilities to a Certificate Authority.

It accomplishes this by using the same logic specified in RFC 3280 for Certificate Policies (see section 4.2.1.5). More specifically, when a CA certificate lists an Extended Key Usage (such as the one for S/MIME encryption), it assumes the issuer intended to restrict that CA to the EKUs present in the certificate. A simplified version of this logic was also adopted by OpenSSL for SSL certificates.
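One way to picture this behavior is as an intersection of the EKUs listed along the chain, where a certificate with no EKU extension imposes no restriction. The sketch below is a simplified model under that assumption, not the actual Windows or OpenSSL implementation; `effective_ekus` and `chain_valid_for` are hypothetical helper names, and the special anyExtendedKeyUsage value is not handled.

```python
from typing import List, Optional, Set

# Standard EKU object identifiers.
SERVER_AUTH = "1.3.6.1.5.5.7.3.1"       # id-kp-serverAuth
EMAIL_PROTECTION = "1.3.6.1.5.5.7.3.4"  # id-kp-emailProtection


def effective_ekus(chain_ekus: List[Optional[Set[str]]]) -> Optional[Set[str]]:
    """Intersect the EKUs along a chain (root first, leaf last).

    An entry of None means the certificate has no EKU extension.
    A result of None means the chain is unrestricted.
    """
    allowed: Optional[Set[str]] = None
    for ekus in chain_ekus:
        if ekus is None:
            continue  # no EKU extension: no additional restriction
        allowed = set(ekus) if allowed is None else allowed & ekus
    return allowed


def chain_valid_for(chain_ekus: List[Optional[Set[str]]], purpose: str) -> bool:
    """True if every EKU-bearing certificate in the chain allows `purpose`."""
    allowed = effective_ekus(chain_ekus)
    return allowed is None or purpose in allowed


# A root with no EKU, a subordinate CA limited to secure email,
# and a leaf that claims to be an SSL server certificate.
chain = [None, {EMAIL_PROTECTION}, {SERVER_AUTH}]
print(chain_valid_for(chain, SERVER_AUTH))
```

In this model the SSL server certificate is rejected because the subordinate CA above it was only delegated the secure email usage.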

Because the Microsoft behavior is more restrictive than the behavior specified in RFC 3280, it does not break applications that do not support it, and it allows a CA to restrict behavior even further for clients that use the Windows certificate validation logic (nearly 70% of the deployed browsers today).

Client Compatibility

Most browsers and email clients support these concepts; unfortunately, not all of them support Name Constraints.

Despite that, they all do honor the RFC 3280 behavior for critical extensions (see section 4.2), which states:

A certificate using system MUST reject the certificate if it encounters a critical extension it does not recognize

This means that when the Name Constraints extension is marked Critical, implementations that do not support it will “fail-closed”. Marking it Critical is therefore an effective way to technically enforce that CAs are not trusted for names they are not authoritative for. It also means there will be cases where a CA is authoritative for a name but clients that do not support the extension can't trust the certificates it issues.

This issue can be addressed by not marking the extension Critical. When this is done, clients that understand Name Constraints will continue to honor the policies expressed in it, and those that do not will simply ignore the extension.
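The difference between the critical and non-critical cases can be sketched with a small model of the RFC 3280 rule. This is an illustration only: the `Extension` class and the `accepts` helper are hypothetical names, and the "legacy" and "modern" client sets are assumptions made up for the example rather than descriptions of any specific product.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set

# Standard extension object identifiers.
BASIC_CONSTRAINTS = "2.5.29.19"
NAME_CONSTRAINTS = "2.5.29.30"


@dataclass(frozen=True)
class Extension:
    oid: str
    critical: bool


def unrecognized_critical(extensions: Iterable[Extension],
                          supported: Set[str]) -> List[str]:
    """Return the OIDs of critical extensions the client does not understand."""
    return [e.oid for e in extensions if e.critical and e.oid not in supported]


def accepts(extensions: Iterable[Extension], supported: Set[str]) -> bool:
    """Reject on any unrecognized critical extension; ignore the rest."""
    return not unrecognized_critical(extensions, supported)


# Hypothetical clients: one that understands Name Constraints, one that does not.
modern = {BASIC_CONSTRAINTS, NAME_CONSTRAINTS}
legacy = {BASIC_CONSTRAINTS}

critical_nc = [Extension(BASIC_CONSTRAINTS, True), Extension(NAME_CONSTRAINTS, True)]
noncritical_nc = [Extension(BASIC_CONSTRAINTS, True), Extension(NAME_CONSTRAINTS, False)]

print(accepts(critical_nc, legacy))      # legacy client fails closed
print(accepts(noncritical_nc, legacy))   # legacy client ignores the extension
print(accepts(critical_nc, modern))      # modern client goes on to enforce it
```

Note that acceptance here only covers the criticality check; a client that understands the extension would still go on to evaluate the constraints themselves.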

This is of course a trade-off of security in exchange for compatibility, but one with far more upside than downside.

Specifically, this approach means users of clients that do not support the extension are no worse off than they would be without it, while those with support get additional protection in cases where a subordinate CA has been compromised or is willfully issuing certificates for names it is not authoritative for.

With that said, support for Name Constraints is actually quite good as the following table illustrates.

| | Honors Criticality | Supports Basic Constraints | Supports DNS Name Constraints | Supports RFC 822 Name Constraints | Supports Policy Constraints | Supports constrained EKU | Successfully enforces |
|---|---|---|---|---|---|---|---|
| IE [1] | Yes | Yes | Yes | N/A | Yes | Yes | Yes (Open) |
| Outlook [1] | Yes | Yes | Yes | Yes | Yes | Yes | Yes (Open) |
| Firefox [1] | Yes | Yes | Yes | Yes | Yes | No | Yes (Open) |
| Thunderbird [1] | Yes | Yes | Yes | Yes | Yes | Yes | Yes (Open) |
| Opera [1] | Yes | Yes | No [2] | No [2] | No [2] | Yes (SSL only) [3] | Yes (Closed) |
| Windows / Safari [1] | Yes | Yes | Yes | Yes | Yes | Yes | Yes (Open) |
| OSX / Safari [4] | Yes | Yes | No [5] | No [5] | No [5] | No | Yes (Closed) |

What this table shows is:

- It is possible to rely on the Name Constraints extension as an effective enforcement technique if the extension is marked as critical.

- It is possible to rely on the Basic Constraints extension as an effective enforcement technique.

- For Safari and Opera, this success comes from honoring the semantics for critical extensions rather than from understanding the Name Constraints extension.

For customers this means that if you must interoperate with Opera or Safari (yes, even on iPad and iPhone), a certificate with a Name Constraints extension marked “Critical” will result in the certificate chain looking invalid.

Thankfully, according to StatCounter these represent less than 6% of all browsers on the Internet, and anecdotal evidence shows almost no use in the enterprise.

With that said, most environments' business requirements will not allow failures even for such a small number of clients. In these environments, deploying Name Constraints as a non-critical extension will be required. Not 100% of the security benefits are realized with this approach, but it does significantly reduce the risk.

In such cases it is recommended that, once the remaining legacy clients that do not support Name Constraints have been replaced with more recent versions that do, the CAs be re-issued with the extension marked as critical.

[1] Tests on Windows were completed with Windows 7, IE 9.0, Outlook 2007, Safari 5.05, Opera 11.61, Firefox/Thunderbird 10.0.2.

[2] OpenSSL supports name constraints for both name forms as well as policy constraints; Opera has chosen not to enable these capabilities until demand is present. This work was done in OpenSSL in 2008 as part of a contract to Google.

[3] Opera uses OpenSSL, which supports restricting a CA from issuing valid SSL server certificates if its parent did not place the SSL EKU in its certificate.

[4] Tests on OSX were completed with Lion and Safari 5.05

[5] Safari on the Mac uses the PKITS tests, so its developers are aware of the deficiency in their validation logic. They have not publicly stated they will support these constraints, but we expect support in the future.

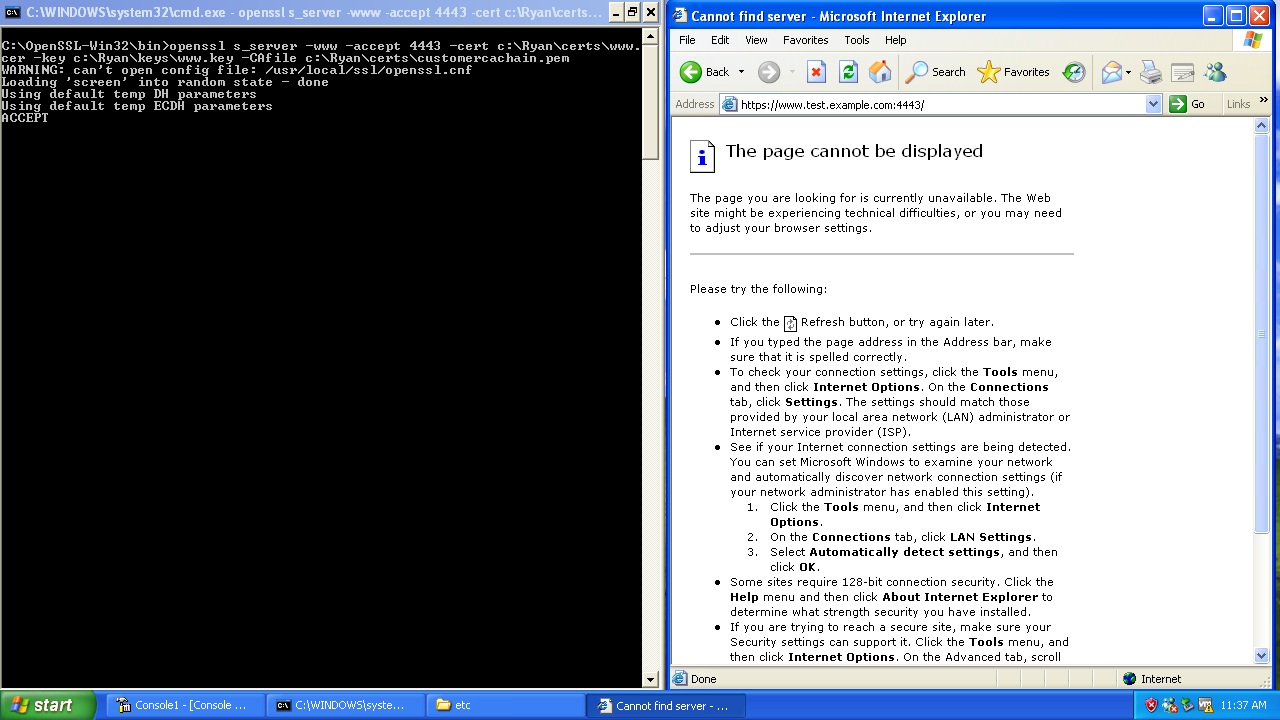

Server Compatibility

If you have a server that accepts or validates client certificates, you will also care about its support for validating certificates that have these constraints.

Each environment is a little different, and the number of server choices one sees in these cases feels limitless at times. As such, we are only able to provide more abstract guidance here.

In the case of Windows servers such as IIS, the important factor is which version of Windows you are running, as the support for PKI is built into the Windows platform. Applications designed for Windows are most commonly built on this platform support, and Microsoft applications always are.

The concepts discussed here have all been supported since Windows 2003, though there were significant improvements in the 2008 release.

The net of the above is that if your server platform is built on this API you gain support for these concepts; on other platforms it of course depends on which libraries they chose for certificate validation.