HSMs were designed to protect keys from theft and to move those keys into a different security domain than the code that uses them. The workloads using these HSMs authenticate to them with credentials or, worse, shared secrets that are often pushed down to the machines via CI pipelines or at imaging time. These API keys and credentials are often stored in key vaults like HashiCorp Vault, making them no more secure than the key vault itself. Unfortunately, they’re also seldom rotated. If an attacker gains code execution on the box or obtains the API keys or credentials in some other way, they can sign or encrypt with any key on the HSM that those credentials have access to. The attacker doesn’t even need to stay resident on the box, because the key or credential is usually just stored in environment variables and files there, so it can be taken and used later from any network location with a line of sight to the HSM.

In short, beyond a simplistic access control model, HSMs usually do not protect keys in use; they protect them from theft. To make things worse, they have no concept of the workload, so their auditing mechanisms lack the detail needed to usefully monitor how keys are used.

Challenges Using HSMs

By design, the administrative model of HSMs is quite different from what we’re typically used to. The goal of the HSM design was to prevent regular IT staff, third parties with access to the facilities containing the HSMs, or those with code execution on the boxes connected to them from being able to abscond with the keys. These use cases were almost always low-volume systems that were infrequently used relative to other workloads, so when HSMs are placed in front of busier workloads their performance often becomes a bottleneck. It’s possible to design deployments that can keep up with and meet the availability requirements of large-scale systems, but this often requires deploying clusters of HSMs in every region and every cluster where your workload exists and having your data center staff manage these devices, which were designed around largely manual physical administration.

To make things worse, the only compartmentalization concept these HSMs have is usually the “slot,” which you can think of as a virtual HSM within the physical HSM. Each slot you use increases your operational overhead, so customers often either share one slot across many workloads or simply do not use the HSMs at all. The capital and non-capital costs of HSMs therefore often make them impractical both for at-scale systems and for less security-critical use cases. Where these challenges make HSMs impractical, there are often other approaches that can still help mitigate key compromise risks, so it’s not a question of all or nothing.

When HSMs Make Sense

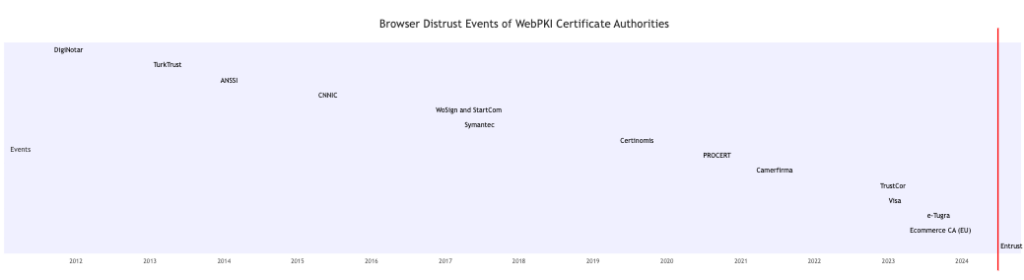

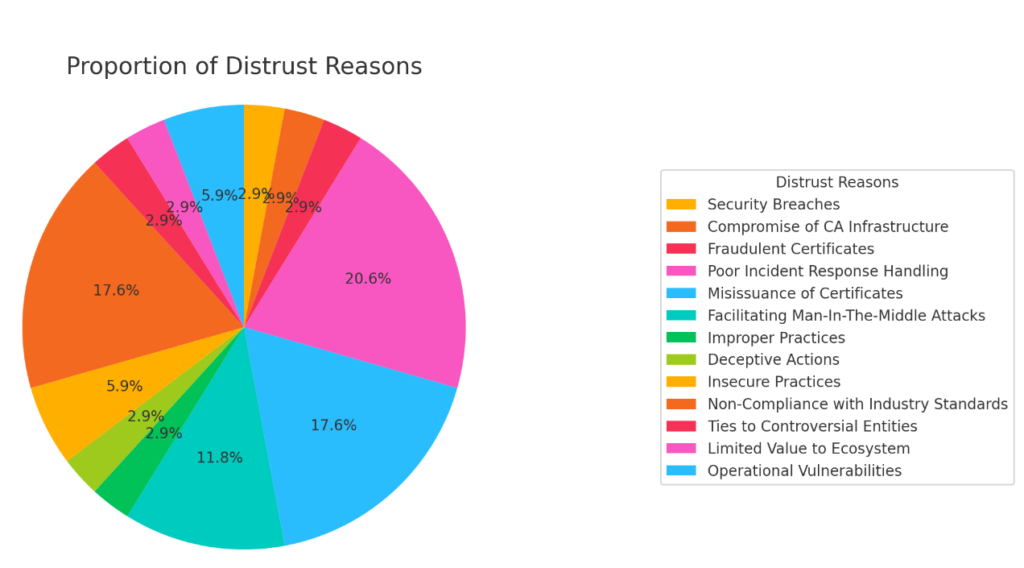

To be clear, HSMs are useful for securing cryptographic keys from theft and are essential in several high-stakes scenarios. For example, when keys need to live for long periods and be managed independently of individuals in an organization who may come and go, and when physical theft of keys is a concern, HSMs are crucial for a sustainable solution. A great example of this is the key material associated with a root Certificate Authority or cryptocurrency wallet. These keys seldom change, live for many years, and must survive many risks that many other use cases do not face.

Beyond key storage, in some cases HSMs can be used as part of a larger security system where the consumption of key material is a small part of the security operation. In these cases, code is written to run within the HSM so that the HSM becomes part of how the overall system delivers abuse protection. For example, Apple has developed code that runs on HSMs to help iPhone users recover their accounts with reduced exposure to attacks from Apple staff. Some cryptocurrency companies implement similar measures to protect their wallets. In these use cases, the HSM is used as a trusted execution environment, a stronger confidential computing-like capability, for the TCB of a larger software system. This is achieved by running code on the HSM that exposes a higher-level transactional interface with constraints such as quorums, time-of-day restrictions, rate limiting, or custom workload policies. These solutions often generate the message to be signed or encrypted in the HSM and then use a key protected within the HSM to sign or encrypt that artifact.
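To make the idea of a higher-level transactional interface more concrete, here is a minimal Python sketch of a signer that enforces a quorum and a rate limit before it builds and signs a message itself. Everything in it is an assumption for illustration: the class name `PolicyGatedSigner`, the `sign_transfer` method, and the message format are hypothetical, and an HMAC stands in for whatever signing primitive a real HSM would use. It is not how any particular vendor or company implements this.

```python
import hashlib
import hmac
import time
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class PolicyGatedSigner:
    """Toy stand-in for code running inside an HSM (all names are hypothetical)."""

    key: bytes              # in a real HSM this key would never leave the device
    approvers: frozenset    # identities allowed to approve a request
    quorum: int             # number of distinct valid approvals required
    max_per_window: int     # rate limit: signatures allowed per window
    window_seconds: float = 60.0
    _recent: List[float] = field(default_factory=list, init=False)

    def sign_transfer(self, account: str, amount: int,
                      approvals: Set[str], now: Optional[float] = None) -> bytes:
        now = time.time() if now is None else now

        # Quorum: only approvals from known approvers count.
        if len(set(approvals) & self.approvers) < self.quorum:
            raise PermissionError("quorum not met")

        # Rate limit: drop timestamps outside the window, then check the count.
        self._recent = [t for t in self._recent if now - t < self.window_seconds]
        if len(self._recent) >= self.max_per_window:
            raise PermissionError("rate limit exceeded")
        self._recent.append(now)

        # The message is generated inside the trust boundary, so the caller
        # cannot ask for an arbitrary blob to be signed.
        message = f"transfer:{account}:{amount}".encode()
        # HMAC is only a placeholder for the HSM's real signing operation.
        return hmac.new(self.key, message, hashlib.sha256).digest()
```

A caller would construct the signer once and then submit requests such as `signer.sign_transfer("acct-1", 10, {"alice", "bob"})`; the point of the design is that the caller only ever sees the constrained operation, never a generic "sign these bytes" call.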

HSMs are also often mandated in some environments, largely for historical reasons, but they’re required nonetheless. The impracticality of this requirement has led cloud HSMs to modify the security model of traditional designs. These modifications weaken the original security guarantees that HSMs were expected to deliver so that modern systems can continue to use them. For example, HSMs originally required operators to bring them back up after a power failure using physically inserted tokens or smart cards and PINs, but now they can be configured to unlock automatically. Additionally, the use of HSMs in the cloud is now often gated by simple API keys rather than smart cards or other asymmetric credentials bound to the subject using the key. With all this said, requirements are requirements, and many industries like finance, healthcare, and government have requirements such as FIPS 140-2 Level 2+ and Common Criteria protection of keys, which lead to mandated use of HSMs even when they may not be the most appropriate answer to how to protect keys.

The Answer: Last Mile Key and Credential Management

While HSMs provide essential protection for cryptographic keys from theft, for many use cases they fall short in preventing the misuse of keys and credentials. To address this gap, organizations also need robust last-mile key and credential management to complement HSMs, ensuring the entire lifecycle of a key is secured. Video game companies do it, media companies do it, and so should the software and services we rely on to keep our information safe.

- Key Isolation and Protection: Protect keys from the workloads that use them by using cryptographic access controls and leveraging the security capabilities provided by the operating system.

- Dynamic Credential Management: Implement systems that automatically rotate credentials and API keys. This limits the value of exfiltrated credentials and keys to an attacker.

- Granular Access Controls: Implement strong, attested authentication of the workload using the keys, enabling access controls that ensure only authorized entities can access the cryptographic keys.

- Enhanced Visibility and Auditing: Integrate solutions that provide detailed visibility into how and where keys and credentials are used, enabling detection of usage anomalies and quick impact assessments during security incidents.

- Automated Lifecycle Management: Utilize automated tools to manage the entire lifecycle of keys and credentials, from creation and distribution to rotation, increasing confidence in your ability to roll keys when needed.
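As a rough illustration of how a few of these ideas fit together, the sketch below issues a short-lived credential tied to a workload identifier and records every verification attempt in an audit log. It is a simplified sketch under stated assumptions, not a recommended design: the function names `issue_credential` and `verify_credential`, the `workload|expiry|tag` format, and the in-memory list used as an audit log are all hypothetical, and the workload identifier is simply trusted here, whereas a real system would derive it from attestation.

```python
import hashlib
import hmac


def issue_credential(issuer_key: bytes, workload_id: str,
                     ttl_seconds: int, now: float) -> str:
    """Issue a credential that names its workload and expires on its own.

    Assumes workload_id does not contain the '|' separator.
    """
    expires = int(now) + ttl_seconds
    payload = f"{workload_id}|{expires}"
    tag = hmac.new(issuer_key, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{tag}"


def verify_credential(issuer_key: bytes, credential: str,
                      now: float, audit_log: list) -> bool:
    """Check integrity and expiry, and record who tried to use the credential."""
    try:
        workload_id, expires, tag = credential.split("|")
        expires_at = int(expires)
    except ValueError:
        audit_log.append({"workload": None, "time": now, "result": "malformed"})
        return False

    expected = hmac.new(issuer_key, f"{workload_id}|{expires}".encode(),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        result = "bad_tag"
    elif now >= expires_at:
        result = "expired"   # a stolen credential stops working after its TTL
    else:
        result = "ok"

    # Every attempt is logged with the workload identity, not just successes.
    audit_log.append({"workload": workload_id, "time": now, "result": result})
    return result == "ok"
```

Because the credential carries its own expiry, rotation becomes a matter of reissuing on a schedule, and the audit entries carry the workload identity that a bare API key would not.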

This combination of approaches not only protects keys and credentials from theft and reduces their value to attackers but also ensures their proper and secure use, which turns key management into more of a risk management function. A good litmus test for effective key management is whether, in the event of a security incident, you could rotate keys and credentials in a timely manner without causing downtime, or assess with confidence that the keys and credentials were sufficiently protected throughout their lifecycle so that a compromise of an environment that uses cryptography does not translate to a compromised key.

Thinking more holistically about the true key lifecycle and its threat model can help ensure you pass these basic tests.