One of the most complicated things to troubleshoot in X.509 is a failure related to Name Constraints handling. There are a few ways to approach this, but one of the easiest is to use the Extended Error Information in the Windows Certificate Viewer.

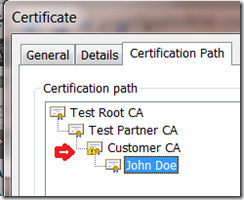

Let’s walk through an exercise so you can try this on your own. First, download this script; if you extract its contents and run makepki.bat, you get a multi-level PKI that looks something like this:

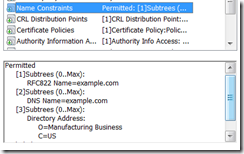

Before we begin, it’s useful to understand that Name Constraints are applied at the issuing CA level. In this case, the constraints say that the CA is authoritative for any DNS or RFC822 name within the example.com domain; they also permit a specific base distinguished name (DN).
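To make those matching rules concrete, here is a minimal Python sketch of how a chain engine might test one name against one permitted subtree, following the rules for dNSName, rfc822Name and directoryName constraints in RFC 5280 (the successor to RFC 3280). The function names are hypothetical, and the sketch leaves out excluded subtrees, internationalized names, and the string-preparation rules real engines use when comparing DNs.

```python
from __future__ import annotations


def dns_name_matches(name: str, constraint: str) -> bool:
    """dNSName rule: adding zero or more labels on the left satisfies the
    constraint, so "example.com" covers "www.example.com" but not
    "badexample.com"."""
    name = name.lower().rstrip(".")
    constraint = constraint.lower().rstrip(".")
    return name == constraint or name.endswith("." + constraint)


def email_matches(address: str, constraint: str) -> bool:
    """rfc822Name rule:
    "user@example.com" -> only that mailbox
    "example.com"      -> any mailbox on the host example.com
    ".example.com"     -> any mailbox on a host inside example.com
                          (but not on example.com itself)"""
    local, _, host = address.rpartition("@")
    host = host.lower()
    if "@" in constraint:
        c_local, _, c_host = constraint.rpartition("@")
        # Simplification: local part compared exactly, host case-insensitively.
        return local == c_local and host == c_host.lower()
    constraint = constraint.lower()
    if constraint.startswith("."):
        return host.endswith(constraint)
    return host == constraint


def dn_matches(subject: list[str], base: list[str]) -> bool:
    """directoryName rule: the subject DN must start with the base DN.
    DNs are modelled here as lists of RDN strings, most significant first;
    real engines compare RDNs using string-preparation rules, not ==."""
    return subject[: len(base)] == base


# Hypothetical names in the spirit of the example.com scenario.
print(dns_name_matches("www.example.com", "example.com"))  # True
print(email_matches("user@acme.com", "example.com"))       # False
print(dn_matches(["C=US", "O=Example", "CN=User"], ["C=US", "O=Example"]))  # True
```

Note the asymmetry between the two host-based rules: a bare `example.com` covers subdomains for DNS names but only the exact host for email addresses, which is an easy thing to trip over when writing constraints.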

In our example, these constraints are applied by the “Test Partner CA” to the “Customer CA”; you can see the restrictions in the Customer CA’s certificate:

This script creates two certificates: one for email (user.cer) and another for SSL (www.cer). Normally the script makes both of these certificates fall within the example.com domain space, but for the purpose of this post I have modified the openssl.cfg to put the email certificate in the acme.com domain. The idea is that since this domain is not included in the constraints, an RFC 3280-compliant chain engine will reject the certificate, and we have an error to diagnose.
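The failure the modified openssl.cfg is designed to trigger can be sketched like this: for each name type the CA’s permitted subtrees cover, every name of that type in the leaf must fall inside at least one of them. The constraint values and the leaf names (`www.example.com`, `user@acme.com`) are hypothetical stand-ins for what the script actually generates, and only host-style rfc822Name constraints are handled.

```python
from __future__ import annotations

# Hypothetical permitted subtrees, keyed by GeneralName type.
PERMITTED = {
    "dns": ["example.com"],
    "email": ["example.com", ".example.com"],
}


def _dns_ok(name: str, base: str) -> bool:
    # dNSName: the base itself or any name with extra labels on the left.
    name, base = name.lower(), base.lower()
    return name == base or name.endswith("." + base)


def _email_ok(address: str, base: str) -> bool:
    # rfc822Name host form: "example.com" = that host, ".example.com" = subdomains.
    host = address.rpartition("@")[2].lower()
    base = base.lower()
    return host.endswith(base) if base.startswith(".") else host == base


MATCHERS = {"dns": _dns_ok, "email": _email_ok}


def violations(leaf_names: dict[str, list[str]],
               permitted: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (type, name) pairs that fall outside every permitted subtree
    of their type. Types with no permitted subtrees are unconstrained."""
    bad = []
    for kind, names in leaf_names.items():
        bases = permitted.get(kind)
        if not bases:
            continue
        for name in names:
            if not any(MATCHERS[kind](name, base) for base in bases):
                bad.append((kind, name))
    return bad


www_cer = {"dns": ["www.example.com"]}
user_cer = {"email": ["user@acme.com"]}
print(violations(www_cer, PERMITTED))   # []
print(violations(user_cer, PERMITTED))  # [('email', 'user@acme.com')]
```

Returning the offending (type, name) pairs, rather than a bare pass/fail, is exactly the information the rest of this post goes looking for in the Certificate Viewer.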

Normally we don’t know in advance what failure is associated with a certificate chain (after all, that’s why we are debugging it), but the process we use here will help us figure out the answer. Applications are notorious for not sharing why a certificate chain was rejected.

Since we can’t rely on the application, one way to figure this out on our own is to examine the chain outside the application, in the Windows Certificate Viewer, and see whether the issue shows up there.

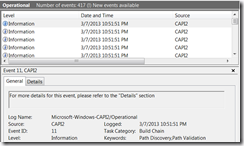

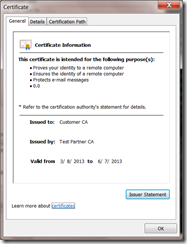

Let’s start by looking at the “good” certificate:

What’s important here is that we see no errors, but how do we know that? Well, let’s look at the bad certificate and see what the difference is:

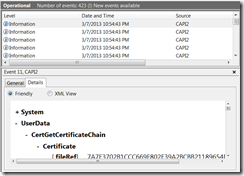

The certificate clearly has a problem, and Windows has done a decent job of telling us what it is: there is a name in this certificate that is inconsistent with the Name Constraints associated with this certificate chain. The question is: which name?

To answer that question, we need to look at the Name Constraints. Remember that this extension lives in the CA certificates, and it can appear in any or all of them, which means we first need to figure out where the constraints are.

To do that, we start at the “Certification Path” tab, where you see something like this:

Notice the little yellow flag; that’s the certificate viewer saying this is where the problem is. Let’s take a look at that certificate:

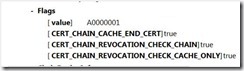

But wait! There isn’t a problem with this one. That’s because this certificate is actually good; it’s the certificate it issued that is the problem. Here is the non-obvious part: let’s look at the Details tab:

Here Windows is telling us exactly what the problem was with the certificate this CA issued: it is the email address, and specifically its use of the acme.com domain.

Remember that this CA was restricted, via the Name Constraints extension, to the example.com domain when issuing email certificates. The chain engine would be non-compliant with RFC 3280 if it trusted this certificate.

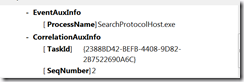

But why did we see this Extended Error Information on this certificate and not on the leaf itself? This is by design, and it makes sense when you think about it: Name Constraints can exist in each issuing CA certificate in the chain, and what is happening here is that CryptoAPI is telling us that the flagged issuer certificate is the offending actor.
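That attribution can be modelled with a short sketch: walk the chain from the root down, and whenever a CA certificate carries permitted subtrees, check every certificate beneath it, reporting each violation against the CA whose constraints were broken. This only mirrors the behaviour seen in the viewer and is not how CryptoAPI is implemented; the `Cert` type, the root’s name and the mailbox are hypothetical, and only rfc822Name host constraints are modelled (the RFC’s special handling of self-issued certificates is ignored).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Cert:
    subject: str
    emails: list[str] = field(default_factory=list)
    # Permitted rfc822Name subtrees from this cert's NameConstraints (CAs only).
    permitted_email: list[str] | None = None


def email_ok(address: str, base: str) -> bool:
    # Host-style rfc822Name match: ".example.com" = subdomains, else exact host.
    host = address.rpartition("@")[2].lower()
    base = base.lower()
    return host.endswith(base) if base.startswith(".") else host == base


def find_offending_issuers(chain: list[Cert]) -> list[tuple[str, str, str]]:
    """chain[0] is the root, chain[-1] the leaf. Returns
    (constraining CA, violating cert, offending name) triples."""
    problems = []
    for i, ca in enumerate(chain):
        if ca.permitted_email is None:
            continue
        # Constraints in certificate i apply to every certificate after it.
        for cert in chain[i + 1:]:
            for addr in cert.emails:
                if not any(email_ok(addr, b) for b in ca.permitted_email):
                    problems.append((ca.subject, cert.subject, addr))
    return problems


chain = [
    Cert("Root CA"),  # hypothetical root name
    Cert("Test Partner CA"),
    Cert("Customer CA", permitted_email=["example.com", ".example.com"]),
    Cert("user.cer", emails=["user@acme.com"]),
]
print(find_offending_issuers(chain))
# [('Customer CA', 'user.cer', 'user@acme.com')]
```

The first element of each triple is the certificate that would carry the yellow flag, which is why the error surfaces on the Customer CA rather than on the leaf.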

This Extended Error Information is available in the Certificate Viewer in a number of cases (for example, revocation), though most of the interesting cases require the application itself to launch the Certificate Viewer, because only then do you get to view the certificate chain (and all of its associated state) that the application was using when it encountered the problem.

![clip_image001](http://unmitigatedrisk.com/wp-content/uploads/2013/03/clip_image0016_thumb.png)

![clip_image002](http://unmitigatedrisk.com/wp-content/uploads/2013/03/clip_image0026_thumb.png)

![clip_image003](http://unmitigatedrisk.com/wp-content/uploads/2013/03/clip_image0036_thumb.png)